I saw that IKEA launched new smart home products at affordable prices and decided to play with them over the holiday season. These newer IKEA devices use Matter over Thread – the “new” and “universal” smart home standard that promises interoperability across ecosystems.

The vision was simple: control all my devices from a single interface, with local control (no cloud dependency), and the flexibility to switch ecosystems if needed. Matter makes this possible by allowing devices to be shared across multiple controllers simultaneously.

Why Home Assistant Over Apple Home?

While Apple Home works well within the Apple ecosystem, I chose to try Home Assistant for several reasons:

- Open source and extensible – Thousands of integrations, custom automations, maximized hackability & HACS.

- No vendor lock-in – Works with virtually any smart home protocol (Matter, Zigbee, Z-Wave, WiFi, etc.) and almost any device that can be controlled remotely.

- Advanced automations – Far more powerful than Apple’s limited automation capabilities

- Local control – Everything runs on my own hardware, no cloud required. I already have a NAS with a bunch of services running locally in Docker.

- Matter multi-admin support – Devices can be controlled by both Apple Home and Home Assistant simultaneously, so the transition should be smooth.

Running Home Assistant on a NAS

Instead of buying dedicated hardware (like Home Assistant Green or a Raspberry Pi), I decided to run Home Assistant on my existing UGREEN DXP4800PLUS NAS. This approach has several advantages, at least for me:

- Cost savings – No additional hardware purchase, only IKEA lamps, switches and sensors

- Reliability – NAS systems are designed to run 24/7

- Consolidation – One device to maintain instead of multiple

Docker vs VM

For running Home Assistant on a NAS, you have two options: Docker containers or a full Virtual Machine. I chose Docker because:

- Lower resource usage – Containers share the host kernel

- Maintainability – I have a private git repo with all my docker compose files and configuration

- Simpler network configuration – Direct access to the host network for mDNS/multicast (no need to configure a network bridge on the NAS)

The main downside of Docker is that some Home Assistant add-ons don’t work (they require Home Assistant OS). However, you can run most services as separate containers, which is what we’ll do with the Matter server.

Reusing Apple’s Thread Border Router

Here’s where it gets interesting. Thread devices need a Thread Border Router to communicate with your IP network. You could buy a dedicated one.

But if you already have Apple devices like a HomePod Mini or Apple TV 4K, they already function as Thread Border Routers! The idea was simple: let Apple’s border router handle the Thread mesh networking, while Home Assistant controls the devices via Matter multi-admin.

This should work because:

- Matter supports multi-admin (device joins multiple controllers)

- Thread Border Routers advertise routes via IPv6 Router Advertisements

- Any device on the network should be able to reach Thread devices through the border router

Should being the operative word…

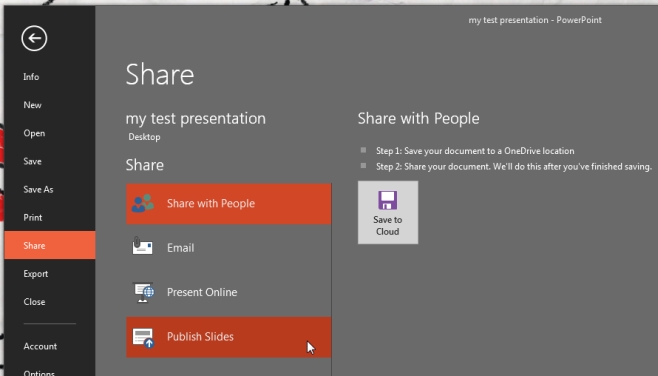

The Setup: Docker Compose

Here’s the docker-compose configuration for running Home Assistant and Matter Server:

# docker-compose.yaml
services:
  homeassistant:
    container_name: homeassistant
    image: "ghcr.io/home-assistant/home-assistant:stable"
    volumes:
      - ./config:/config
      - /etc/localtime:/etc/localtime:ro
      - /run/dbus:/run/dbus:ro
    restart: unless-stopped
    privileged: true
    network_mode: host
    environment:
      TZ: Europe/Warsaw

  matter-server:
    container_name: matter-server
    image: ghcr.io/home-assistant-libs/python-matter-server:stable
    restart: unless-stopped
    security_opt:
      - apparmor:unconfined
    volumes:
      - ./matter-data:/data
      - /run/dbus:/run/dbus:ro
    network_mode: host
    command: --storage-path /data --primary-interface eth0

Key points:

- network_mode: host is essential – Matter requires mDNS/multicast, which doesn’t work well with Docker’s bridge networking

- --primary-interface eth0 tells the Matter server which network interface to use (this assumes your Ethernet cable is plugged into the NAS’s first port)

- --storage-path /data ensures device pairings persist across container restarts
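If you are not sure that eth0 is the right name on your NAS, the interface carrying the default route is usually a reasonable guess. The snippet below is a minimal sketch, not part of my original setup: default_iface is a hypothetical helper name, and it assumes the usual one-line-per-route format printed by ip route show default.

```shell
#!/bin/sh
# Print the interface name of the first default route found on stdin.
# Expects lines in the format printed by `ip route show default`, e.g.
#   default via 192.168.1.1 dev eth0 proto dhcp metric 100
default_iface() {
    awk '$1 == "default" {
        # Scan the fields for "dev" and print the word that follows it.
        for (i = 2; i < NF; i++) if ($i == "dev") { print $(i + 1); exit }
    }'
}

# Typical use on the NAS (not executed here):
#   ip route show default | default_iface
```

Whatever name it prints is the value to try for --primary-interface (and in the sysctl paths later on) if eth0 does not exist on your system.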

The Problem: Commissioning Fails

After setting everything up, I tried to share my device from Apple Home to Home Assistant. The process:

- Open Apple Home → Select device → Turn On Pairing Mode

- Open Home Assistant → Add Matter device → Enter pairing code

But commissioning kept failing with these errors in the matter-server logs:

CHIP_ERROR [chip.native.CTL] Discovery timed out

CHIP_ERROR [chip.native.SC] PASESession timed out while waiting for a response from the peer. Expected message type was 33

WiFi-based Matter devices worked fine – only Thread devices failed. Something was wrong with the network path between my NAS and the Thread devices.

Debugging: The Investigation

Step 1: Verify mDNS Discovery Works

First, I checked if the NAS could even see the Thread Border Routers and Matter devices:

# SSH into the NAS

ssh <user>@<nas-address>

# Check if Thread Border Routers are visible

avahi-browse -t _meshcop._udp

Output:

+ eth0 IPv4 Apple TV 4K _meshcop._udp local

+ eth0 IPv6 Apple TV 4K _meshcop._udp local

Good – the NAS can see the Apple Border Routers via mDNS.

Step 2: Check Device in Commissioning Mode

While the device was in pairing mode, I checked if it was advertising:

avahi-browse -r _matterc._udp

Output:

hostname = [264DA88A7891BAE6.local]

address = [fd40:aff8:a38d:0:8f51:64dc:1b6e:f8b0]

port = [5540]

txt = ["VP=4476+36873"]

The device was visible and advertising its IPv6 address. But notice the address: fd40:aff8:a38d:... – this is a different IPv6 prefix than my home network!
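The prefix comparison can also be done mechanically. This is a rough sketch with hypothetical helper names (prefix64, same_prefix64); it assumes the first four groups of each address are written out explicitly, with no "::" compression inside them and the same zero-padding on both sides, which holds for the addresses in this article but not for IPv6 text in general.

```shell
#!/bin/sh
# Print the first four hextets (the /64 prefix) of an IPv6 address.
# Assumption: those four groups are spelled out, as in this article.
prefix64() {
    printf '%s\n' "$1" | cut -d: -f1-4
}

# Succeed when two addresses share the same textual /64 prefix.
same_prefix64() {
    [ "$(prefix64 "$1")" = "$(prefix64 "$2")" ]
}

# Example (not executed here); the NAS address suffix is made up:
#   same_prefix64 fd40:aff8:a38d:0:8f51:64dc:1b6e:f8b0 fd9c:3a44:26e2:453f:1:2:3:4 \
#       || echo "device is on a different /64 than the NAS"
```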

Step 3: Understanding the Network Topology

This revealed the architecture:

Home Network (fd9c:3a44:26e2:453f::/64)

│

├── NAS (fd9c:3a44:26e2:453f:...)

├── PC (fd9c:3a44:26e2:453f:...)

└── Apple Border Router

│

└── Thread Mesh Network (fd40:aff8:a38d::/64)

│

└── IKEA DEVICE (fd40:aff8:a38d:0:8f51:64dc:1b6e:f8b0)

Thread devices live on a separate IPv6 subnet. The Border Router should advertise routes to this subnet so other devices can reach it.

Step 4: Test Connectivity from PC vs NAS

Here’s where it got interesting. From my PC:

# On PC

ping -6 -c 3 fd40:aff8:a38d:0:8f51:64dc:1b6e:f8b0

Output:

64 bytes from fd40:aff8:a38d:0:8f51:64dc:1b6e:f8b0: icmp_seq=1 ttl=63 time=25.6 ms

64 bytes from fd40:aff8:a38d:0:8f51:64dc:1b6e:f8b0: icmp_seq=2 ttl=63 time=25.2 ms

64 bytes from fd40:aff8:a38d:0:8f51:64dc:1b6e:f8b0: icmp_seq=3 ttl=63 time=30.2 ms

It works! My PC can reach the Thread device.

But from the NAS:

# On NAS

sudo ping -6 -c 3 fd40:aff8:a38d:0:8f51:64dc:1b6e:f8b0

Output:

3 packets transmitted, 0 received, 100% packet loss

The NAS cannot reach the Thread device! Same network, same device, different results.

Step 5: Compare IPv6 Routes

On my PC:

ip -6 route show | grep fd40

Output:

fd40:aff8:a38d::/64 via fe80::143e:4077:22b:562e dev enp101s0 proto ra

fd40:aff8:a38d::/64 via fe80::49e:d415:b2e8:5904 dev enp101s0 proto ra

The PC has routes to the Thread network, learned via Router Advertisement (proto ra).

On the NAS:

ip -6 route show | grep fd40

Output: (empty)

The NAS has no route to the Thread network! It’s not processing the Router Advertisements from the Apple Border Routers.
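For scripting, grep fd40 is a bit loose, since it matches the string anywhere on the line. A slightly stricter sketch is below; has_route is a hypothetical helper name, and the prefix you pass in is whatever your own Thread network uses.

```shell
#!/bin/sh
# Succeed if stdin (output of `ip -6 route show`) contains a route whose
# destination is exactly the given prefix, e.g. fd40:aff8:a38d::/64.
has_route() {
    awk -v want="$1" '$1 == want { found = 1 } END { exit !found }'
}

# Example (not executed here):
#   ip -6 route show | has_route fd40:aff8:a38d::/64 \
#       && echo "route present" || echo "no route to Thread network"
```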

Step 6: Find the Root Cause

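The key setting is accept_ra_rt_info_max_plen – it sets the maximum prefix length of Route Information Options (RIO) that the kernel accepts from Router Advertisements. Any RIO with a longer prefix is ignored, so a value of 0 throws away the /64 route that the border router announces: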

cat /proc/sys/net/ipv6/conf/eth0/accept_ra_rt_info_max_plen

On NAS: 0 (disabled)

Required: 64 (accept routes with prefix length up to 64)

Root cause found! The NAS kernel was configured to ignore IPv6 Route Information Options, so it never learned how to reach the Thread network.
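To check every interface at once rather than only eth0, something like the following sketch works. The helper names (rio_accepted, report_rio) are hypothetical, and the optional directory argument exists only so the function can be pointed at a copy of the settings instead of the live /proc tree.

```shell
#!/bin/sh
# Succeed when a Route Information Option with prefix length $2 would pass
# an accept_ra_rt_info_max_plen value of $1 (longer prefixes are ignored).
rio_accepted() {
    [ "$2" -le "$1" ]
}

# Print "<interface> <max_plen> ok|ignored" for every interface found under
# the given conf directory (default: the live kernel settings).
report_rio() {
    conf_dir=${1:-/proc/sys/net/ipv6/conf}
    for f in "$conf_dir"/*/accept_ra_rt_info_max_plen; do
        [ -r "$f" ] || continue
        iface=$(basename "$(dirname "$f")")
        value=$(cat "$f")
        # Thread border routers announce a /64, so test against 64.
        if rio_accepted "$value" 64; then state=ok; else state=ignored; fi
        printf '%s %s %s\n' "$iface" "$value" "$state"
    done
}

# Example (not executed here):
#   report_rio
```

Any interface reported as "ignored" will drop the /64 route information sent by a Thread Border Router.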

The Solution

Temporary Fix (Testing)

sudo sysctl -w net.ipv6.conf.eth0.accept_ra_rt_info_max_plen=64

After running this, verify the route appears:

ip -6 route show | grep fd40

# Should now show: fd40:aff8:a38d::/64 via fe80::... dev eth0 proto ra

And test connectivity:

sudo ping -6 -c 3 fd40:aff8:a38d:0:8f51:64dc:1b6e:f8b0

# Should now work!

Permanent Fix: Systemd Service

The /etc/sysctl.d/ approach does not work on UGreen OS (and likely on other NAS systems such as QNAP or Synology). These systems restore /etc/ from a read-only image on every boot. Instead, create a systemd service that applies the setting after boot:

sudo tee /etc/systemd/system/thread-routing.service << 'EOF'

[Unit]

Description=Enable IPv6 RIO for Thread Border Routers (Matter/HomeAssistant)

After=network-online.target

Wants=network-online.target

[Service]

Type=oneshot

ExecStart=/sbin/sysctl -w net.ipv6.conf.eth0.accept_ra_rt_info_max_plen=64

ExecStart=/sbin/sysctl -w net.ipv6.conf.all.accept_ra_rt_info_max_plen=64

RemainAfterExit=yes

[Install]

WantedBy=multi-user.target

EOF

# Enable and start the service

sudo systemctl daemon-reload

sudo systemctl enable thread-routing.service

sudo systemctl start thread-routing.service

Verify After Reboot

sudo reboot

# After reboot:

systemctl status thread-routing.service

# Should show: active (exited)

cat /proc/sys/net/ipv6/conf/eth0/accept_ra_rt_info_max_plen

# Should show: 64

ip -6 route show | grep fd40

# Should show routes to Thread network
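One thing to keep in mind: the kernel installs the route only when it processes a Router Advertisement, so right after boot (or right after changing the sysctl) the route check may come back empty until the next advertisement arrives. A small polling helper avoids false alarms. This is a generic sketch; retry and thread_route_present are hypothetical names, and the attempt count and delay are arbitrary.

```shell
#!/bin/sh
# Run a command up to $1 times, sleeping $2 seconds between attempts.
# Succeeds as soon as the command succeeds; fails if every attempt fails.
retry() {
    attempts=$1
    delay=$2
    shift 2
    n=1
    while [ "$n" -le "$attempts" ]; do
        "$@" && return 0
        # Do not sleep after the final failed attempt.
        [ "$n" -lt "$attempts" ] && sleep "$delay"
        n=$((n + 1))
    done
    return 1
}

# Example (not executed here): poll for the Thread route from this article,
# 12 attempts 5 seconds apart.
#   thread_route_present() { ip -6 route show | grep -q '^fd40:aff8:a38d::/64 '; }
#   retry 12 5 thread_route_present && echo "route learned"
```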

Summary

Running Home Assistant on a NAS with Matter Thread devices is absolutely possible – you can even reuse Apple’s Thread Border Routers instead of buying dedicated hardware. But there’s a catch: some NAS systems, including mine, ship with conservative IPv6 settings that prevent them from learning routes to the Thread network.

Quick Diagnostic Commands

| Check | Command |
|---|---|
| See Thread Border Routers | avahi-browse -t _meshcop._udp |
| See device in pairing mode | avahi-browse -r _matterc._udp |
| Check route to Thread network | ip -6 route show \| grep fd40 |
| Check RIO setting | cat /proc/sys/net/ipv6/conf/eth0/accept_ra_rt_info_max_plen |
| Test connectivity | ping -6 <device-ipv6> |

Key Lessons

- mDNS discovery ≠ connectivity – Just because you can see a device doesn’t mean you can reach it

- Thread uses separate IPv6 prefix – Devices aren’t on your home network, they’re on the Thread mesh

- Compare working vs broken – My PC worked, NAS didn’t – that pointed to a local configuration issue

- NAS systems are quirky – Don’t assume standard Linux configs will persist; use systemd services

- Router Advertisements matter – The accept_ra_rt_info_max_plen setting is crucial for Thread routing
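The first lesson can be turned into a quick check: take every address that mDNS resolves and actually try to reach it. The sketch below assumes avahi-browse's parsable mode (-p), where resolved records start with "=" and fields are separated by ";" in the order interface, protocol, name, type, domain, hostname, address, port, txt. resolved_ipv6 is a hypothetical helper name.

```shell
#!/bin/sh
# Read `avahi-browse -rpt <service>` output on stdin and print the IPv6
# address of every resolved record (lines starting with "=").
# Field 3 is the protocol, field 8 the resolved address.
resolved_ipv6() {
    awk -F';' '$1 == "=" && $3 == "IPv6" { print $8 }'
}

# Example (not executed here): ping each device that is in pairing mode.
#   avahi-browse -rpt _matterc._udp | resolved_ipv6 | while read -r addr; do
#       if ping -6 -c 1 -W 2 "$addr" >/dev/null 2>&1; then
#           echo "$addr reachable"
#       else
#           echo "$addr visible via mDNS but NOT reachable"
#       fi
#   done
```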

With this fix in place, I can now commission Thread devices to Home Assistant while keeping them in Apple Home too. The best of both worlds!
